Background

The goal of a video compression system is to reduce data volume while preserving the perceptual quality of the decompressed video. Standard codecs such as H.264, HEVC, and VP9 face well-known tradeoffs between rate and distortion, especially at low bitrates. Better video compression techniques are essential for more efficient video storage and transmission, which are at the heart of all video streaming applications.

Technology description

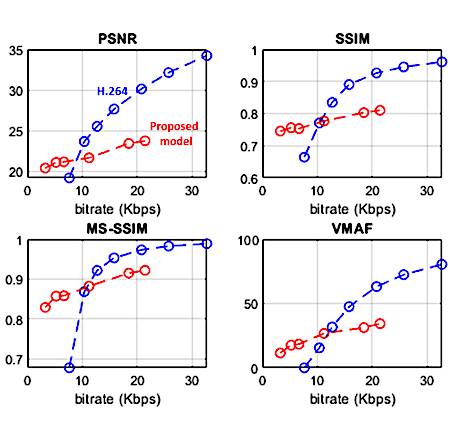

This technology is a video compression technique based on machine learning. It uses generative adversarial networks (GANs) and deep neural networks (DNNs) in a way that is well suited to video containing unpredictable or fast movements, especially at low bitrates. The scheme has two stages, designed to capture the best features of both standard codecs and newer DNN-based codecs. The encoder and decoder are trained using only a limited number of key frames from a single video, rather than a large number of frames from multiple videos. Compression is guided by a soft-edge detector that extracts the edges of objects in each frame together with color information, and a lossless encoder is designed specifically for the resulting soft-edge map. The GAN-based compression engine achieves much higher quality reconstructions than H.264 or HEVC at very low bitrates. Compared to other DNN-based methods, this approach eliminates the need for a large volume of training video for motion estimation.
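To make the idea of a soft-edge map and its lossless coding more concrete, the following is a minimal illustrative sketch in Python. It is not the published method. It assumes a grayscale frame stored as a list of lists and uses a simple gradient-magnitude quantizer as a stand-in for the soft-edge detector, with general-purpose `zlib` compression as a stand-in for the purpose-built lossless encoder. The function names `soft_edge_map`, `encode_edge_map`, and `decode_edge_map` are hypothetical, and the color information and GAN-based reconstruction stage are omitted entirely.

```python
import zlib


def soft_edge_map(frame, levels=4):
    """Toy stand-in for a soft-edge detector (an assumption, not the real one).

    Quantizes the gradient magnitude of a grayscale frame into `levels`
    integer bins: 0 means "no edge", larger values mean stronger edges.
    """
    h, w = len(frame), len(frame[0])
    grad = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Forward differences, clamped at the right and bottom borders.
            gx = frame[y][min(x + 1, w - 1)] - frame[y][x]
            gy = frame[min(y + 1, h - 1)][x] - frame[y][x]
            grad[y][x] = abs(gx) + abs(gy)
    peak = max(max(row) for row in grad) or 1  # avoid dividing by zero
    # Integer rounding of g * (levels - 1) / peak.
    return [[(g * (levels - 1) + peak // 2) // peak for g in row] for row in grad]


def encode_edge_map(edge_map):
    """Losslessly pack the edge map: a 4-byte size header plus zlib payload."""
    h, w = len(edge_map), len(edge_map[0])
    header = h.to_bytes(2, "big") + w.to_bytes(2, "big")
    payload = bytes(v for row in edge_map for v in row)
    return header + zlib.compress(payload, 9)


def decode_edge_map(blob):
    """Invert encode_edge_map exactly."""
    h = int.from_bytes(blob[0:2], "big")
    w = int.from_bytes(blob[2:4], "big")
    flat = zlib.decompress(blob[4:])
    return [list(flat[y * w:(y + 1) * w]) for y in range(h)]


# Usage: a 16x16 frame with a bright square on a dark background.
frame = [[200 if 4 <= y < 12 and 4 <= x < 12 else 20 for x in range(16)]
         for y in range(16)]
edges = soft_edge_map(frame)
blob = encode_edge_map(edges)
assert decode_edge_map(blob) == edges  # the round trip is lossless
```

Because an edge map is mostly zeros, even a generic lossless coder shrinks it considerably. In the scheme described above, a decoder would then reconstruct full frames from such a compact edge representation, a step this sketch does not attempt.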

References

Example video: https://www.youtube.com/watch?v=VHk7G5V6iBs

Publications:

- S. Kim et al., "Adversarial Video Compression Guided by Soft Edge Detection" (2018) https://arxiv.org/abs/1811.10673

- S. Kim et al., "Adversarial Video Compression Guided by Soft Edge Detection," in ICASSP 2020 - 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Barcelona, Spain (2020), pp. 2193-2197, https://doi.org/10.1109/ICASSP40776.2020.9054165

U.S. Patent No. 11,388,412 issued July 12, 2022.

Pending U.S. Patent App. No. 17/840,126 filed June 14, 2022.